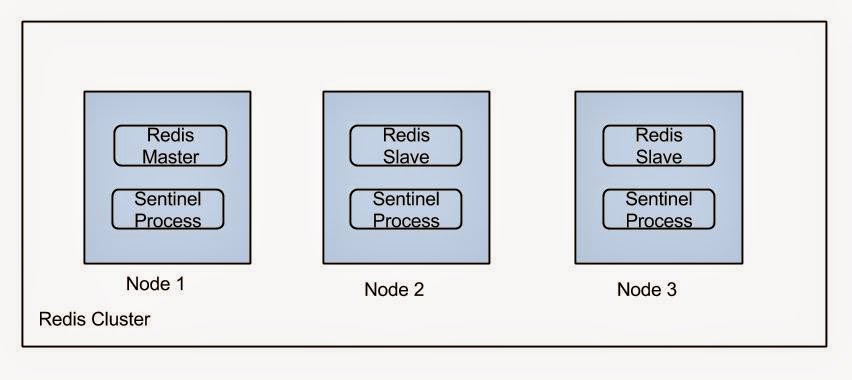

Deploying an HA Redis setup

The Sentinel process is responsible for electing a slave as the new master if the current master fails. For more information, refer to the Redis Sentinel documentation.

- Using the Ubuntu repositories:

  - sudo apt-get install redis-server

- Manual installation:

  - Download a Redis distribution from http://redis.io/download, then follow the instructions at https://www.digitalocean.com/community/tutorials/how-to-install-and-use-redis to set up Redis from the downloaded archive.

- On each slave, set the following properties in the Redis configuration file to enable replication:

  - slaveof <masterip> <masterport>

  - masterauth <master-password>

  The masterauth value must be the same password that is configured on the master (its requirepass value).

- Set the masterauth property on the master as well. If the master goes down and a slave is elected as the new master, the old master can then rejoin later as a slave.

To test whether replication is working, log in to the Redis console of the master using the redis-cli command and add some data. Then log in to each slave and check that the data you entered is there.

- Create a file named sentinel.conf in the directory where your Redis configuration files are located.

- Add the following content to sentinel.conf (change the values according to your setup):

  - sentinel monitor mymaster <ip> <port> 2

    sentinel down-after-milliseconds mymaster 60000

    sentinel failover-timeout mymaster 180000

    sentinel parallel-syncs mymaster 1

The first line tells Redis Sentinel that the master is named "mymaster" and is reachable at <ip>:<port>, and that a failover should start once at least two Sentinels have detected that the master has failed.
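The quorum value at the end of the sentinel monitor line can be read as a simple threshold. The following is a minimal Java sketch of that rule, not Sentinel's actual implementation; the class and method names are hypothetical. It also models a second rule described in the Redis Sentinel documentation: reaching the quorum only marks the master as down, while the failover itself has to be authorized by a majority of all Sentinels.

```java
// Illustrative only: models the two thresholds Sentinel applies, not its real code.
final class SentinelQuorum {
    private SentinelQuorum() {}

    /** The master is flagged as objectively down once at least `quorum` Sentinels report it unreachable. */
    static boolean isObjectivelyDown(int sentinelsReportingDown, int quorum) {
        return sentinelsReportingDown >= quorum;
    }

    /** A failover can proceed only if a strict majority of all known Sentinels authorize it. */
    static boolean canAuthorizeFailover(int sentinelsVoting, int totalSentinels) {
        return sentinelsVoting > totalSentinels / 2;
    }
}
```

With three Sentinels and a quorum of 2, `isObjectivelyDown(2, 2)` and `canAuthorizeFailover(2, 3)` are both true, so such a setup keeps working when one Sentinel is lost.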

Start the Sentinel process on each node using the following command.

sudo redis-sentinel <path to the configuration file> &

To stop a Sentinel process, send it the SHUTDOWN command through redis-cli on the Sentinel port (26379 by default).

redis-cli -p <sentinel port> shutdown

To test failover, kill the master process and check that you can still write to the cluster from your client application. Note that when the master changes, Sentinel rewrites the Redis configuration on each node. To get back to the original state, you will have to revert those changes.
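While a failover is in progress, writes can fail for a short time until a new master has been elected. One way to observe this during the test is to retry the write and count the attempts. The sketch below is a generic helper that uses only the Java standard library; the class name, method name, and parameters are hypothetical, and it assumes the client reports failures as unchecked exceptions (as the Jedis client used later in this post does).

```java
// Hypothetical helper for a failover test: retries a write and reports how many attempts it took.
final class FailoverProbe {
    private FailoverProbe() {}

    /**
     * Runs the write up to maxAttempts times, sleeping delayMillis between failed attempts.
     * Returns the 1-based number of the attempt that succeeded, or rethrows the last failure.
     */
    static int attemptsUntilSuccess(Runnable write, int maxAttempts, long delayMillis) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        RuntimeException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                write.run();
                return attempt;
            } catch (RuntimeException e) {
                last = e; // for example, a connection error while no master is reachable
            }
            if (attempt < maxAttempts) {
                try {
                    Thread.sleep(delayMillis);
                } catch (InterruptedException ie) {
                    Thread.currentThread().interrupt(); // preserve the interrupt flag
                    throw new IllegalStateException("Interrupted while waiting to retry", ie);
                }
            }
        }
        throw last; // every attempt failed
    }
}
```

In a real test, the Runnable should take a fresh connection from the pool on every attempt, because a connection to the killed master stays broken.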

How to access the above setup programmatically

I will use Java with the Jedis library for this example.

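    import java.util.HashSet;
    import java.util.Set;
    import redis.clients.jedis.Jedis;
    import redis.clients.jedis.JedisPoolConfig;
    import redis.clients.jedis.JedisSentinelPool;

    // Addresses of the Sentinel processes, each in "host:port" form.
    String[] nodes = { /* fill in your Sentinel addresses */ };
    Set<String> sentinels = new HashSet<>();
    for (String node : nodes) {
        sentinels.add(node);
    }

    JedisPoolConfig jedisPoolConfig = getJedisPoolConfig();
    // The first argument is the master name monitored by Sentinel ("mymaster" in the sentinel.conf above).
    // pool is a JedisSentinelPool field of the enclosing class.
    pool = new JedisSentinelPool(getConfig(sentinelMasterKey), sentinels, jedisPoolConfig);

    // try-with-resources hands the connection back to the pool (Jedis implements Closeable in current versions).
    try (Jedis jedis = pool.getResource()) {
        jedis.auth(redisPassword);
        jedis.set(key, value);
    }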
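The nodes array is often easier to manage as a single configuration value. As a sketch, assuming a hypothetical comma-separated setting such as "10.0.0.1:26379,10.0.0.2:26379" (26379 is Sentinel's default port), the helper below turns it into the Set<String> of "host:port" entries that JedisSentinelPool takes. The class and method names are made up for illustration.

```java
import java.util.LinkedHashSet;
import java.util.Set;

// Hypothetical helper: parses a comma-separated list of Sentinel addresses.
final class SentinelAddresses {
    private SentinelAddresses() {}

    /** Splits the input on commas, trims each entry, skips blanks, and checks the host:port shape. */
    static Set<String> parse(String commaSeparated) {
        Set<String> sentinels = new LinkedHashSet<>(); // keeps order, drops duplicates
        for (String entry : commaSeparated.split(",")) {
            String address = entry.trim();
            if (address.isEmpty()) {
                continue;
            }
            int colon = address.lastIndexOf(':');
            if (colon <= 0 || colon == address.length() - 1) {
                throw new IllegalArgumentException("Expected host:port but got: " + address);
            }
            // Throws NumberFormatException (a kind of IllegalArgumentException) if the port is not numeric.
            Integer.parseInt(address.substring(colon + 1));
            sentinels.add(address);
        }
        return sentinels;
    }
}
```

The result can be passed directly as the sentinels argument of the JedisSentinelPool constructor used above.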

    private JedisPoolConfig getJedisPoolConfig() {
        JedisPoolConfig jedisPoolConfig = new JedisPoolConfig();
        if (application().configuration().getInt("redis.pool.maxIdle") != null) {
            jedisPoolConfig.setMaxIdle(application().configuration().getInt("redis.pool.maxIdle"));
        }
        if (application().configuration().getInt("redis.pool.minIdle") != null) {
            jedisPoolConfig.setMinIdle(application().configuration().getInt("redis.pool.minIdle"));
        }
        if (application().configuration().getInt("redis.pool.maxTotal") != null) {
            jedisPoolConfig.setMaxTotal(application().configuration().getInt("redis.pool.maxTotal"));
        }
        if (application().configuration().getInt("redis.pool.maxWaitMillis") != null) {
            jedisPoolConfig.setMaxWaitMillis(application().configuration().getInt("redis.pool.maxWaitMillis"));
        }
        if (application().configuration().getBoolean("redis.pool.testOnBorrow") != null) {
            jedisPoolConfig.setTestOnBorrow(application().configuration().getBoolean("redis.pool.testOnBorrow"));
        }
        if (application().configuration().getBoolean("redis.pool.testOnReturn") != null) {
            jedisPoolConfig.setTestOnReturn(application().configuration().getBoolean("redis.pool.testOnReturn"));
        }
        if (application().configuration().getBoolean("redis.pool.testWhileIdle") != null) {
            jedisPoolConfig.setTestWhileIdle(application().configuration().getBoolean("redis.pool.testWhileIdle"));
        }
        if (application().configuration().getLong("redis.pool.timeBetweenEvictionRunsMillis") != null) {
            jedisPoolConfig.setTimeBetweenEvictionRunsMillis(application().configuration().getLong("redis.pool.timeBetweenEvictionRunsMillis"));
        }
        if (application().configuration().getInt("redis.pool.numTestsPerEvictionRun") != null) {
            jedisPoolConfig.setNumTestsPerEvictionRun(application().configuration().getInt("redis.pool.numTestsPerEvictionRun"));
        }
        if (application().configuration().getLong("redis.pool.minEvictableIdleTimeMillis") != null) {
            jedisPoolConfig.setMinEvictableIdleTimeMillis(application().configuration().getLong("redis.pool.minEvictableIdleTimeMillis"));
        }
        if (application().configuration().getLong("redis.pool.softMinEvictableIdleTimeMillis") != null) {
            jedisPoolConfig.setSoftMinEvictableIdleTimeMillis(application().configuration().getLong("redis.pool.softMinEvictableIdleTimeMillis"));
        }
        if (application().configuration().getBoolean("redis.pool.lifo") != null) {
            jedisPoolConfig.setLifo(application().configuration().getBoolean("redis.pool.lifo"));
        }
        if (application().configuration().getBoolean("redis.pool.blockWhenExhausted") != null) {
            jedisPoolConfig.setBlockWhenExhausted(application().configuration().getBoolean("redis.pool.blockWhenExhausted"));
        }
        return jedisPoolConfig;
    }
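The repeated null checks in getJedisPoolConfig() could be folded into a small generic helper. This is only one possible refactoring and not part of Jedis; PoolSettings and applyIfPresent are hypothetical names introduced here for illustration.

```java
import java.util.function.Consumer;

// Hypothetical helper: applies an optional configuration value to a setter.
final class PoolSettings {
    private PoolSettings() {}

    /** Calls the setter only when the configured value is present (non-null). */
    static <T> void applyIfPresent(T value, Consumer<? super T> setter) {
        if (value != null) {
            setter.accept(value);
        }
    }
}
```

With it, each setting becomes a single call that also reads the configuration only once, for example `PoolSettings.applyIfPresent(application().configuration().getInt("redis.pool.maxIdle"), jedisPoolConfig::setMaxIdle);`.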
